What we found was a good estimate for the best fitting parameters given our function. If we found the smallest SSR, does that mean we found the perfect fit? Unfortunately not. We saw that this process can fail, depending on the function and the initial parameters, but let’s assume for a moment it worked. The parameters that give the smallest SSR are considered the best fit.

Its whole purpose is to find the parameters that give the smallest value of this function, the least square. In fact this is what curve_fit optimizes. We take the difference between our data ( y) and the output of our function given a parameter set ( fy). How do we calculate the error between our data and the prediction of the fit? There are many different measures but among the most simple ones is the sum of squared residuals (SSR). So to compare the goodness of different parameters we need to compare our fit to the data. If we did, we would not need to do fitting at all. In most research setting we don’t know our exact parameters. In more realistic settings we can only compare our fit to the noisy data. But what does it mean for a fit to be better or worse? In our example we can always compare it to the actual function. In more subtle cases different initial conditions might result in slightly better or worse fits that could still be relevant to our research question. This is an extreme case, where the fit works almost perfectly for some initial parameters or completely fails for others. The key point is that initial conditions are extremely important because they can change the result we get. With an initial parameter of -5 for tau we get good parameters of -30.4 for tau and 20.6 for init (real values were -30 and 20). Popt, pcov = _fit(exp_decay, x, y_noisy, p0=p0)Īx.set_title("Curve Fit Exponential Growth Good Initials") Let’s see what happens when we choose a negative initial value of -5. So what happens if we choose better parameters? Looking at our exp_decay definition and the exponential growth in our noisy data, we know for sure that our tau has to be negative. Starting from 1, curve_fit never finds good parameters. So what happened? Although we never specified the initial parameters ( p0), curve_fit chooses default parameters of 1 for both fit_tau and fit_init. 
For a real_tau and real_init of -30 and 20 we get a fit_tau and fit_init of 885223976.9 and 106.4, both way off. We will change our tau to a negative number, which will result in exponential growth. That makes it very easy for the method to stop with bad parameters if it stops in a local minimum or a saddle point. When changing the parameters shows very little improvement, the fit is considered done. Instead, it starts with the initial parameters, changes them slightly and checks if the fit improves. The initial parameters are very important because most optimization methods don’t just look for the best fit randomly.

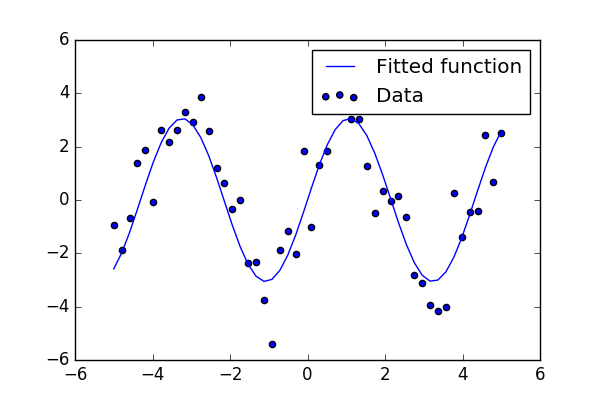

The initial parameters of a function are the starting parameters before being optimized. So why would this every fail? The most common failure mode in my opinion is bad initial parameters. Of course the accuracy will decrease with the sampling. Below is a fit on 20 randomly chosen data points. It also works when the sampling is much more sparse. Technically this can work for any number of parameters and any kind of function. From the call signature of def exp_decay(x, tau, init) we can see that x is the input data while tau and init are the parameters to be optimized such that the difference between the function output and y_noisy is minimal. All other arguments are the parameters to be fit. The first argument must be the input data. The function we are passing should have a certain structure. All we had to do was call _fit and pass it the function we want to fit, the x data and the y data. We get 30.60 for fit_tau and 245.03 for fit_init both very close to the real values of 30 and 250. Our fit parameters are almost identical to the actual parameters. # Sample exp_decay with optimized parametersĪx.set_title("Curve Fit Exponential Decay") # Use _fit to fit parameters to noisy data Noise=np.random.normal(scale=50, size=x.shape) # Sample exp_decay function and add noise
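For readers who want to run the exponential-decay example end to end, here is a minimal, self-contained sketch. The body of `exp_decay` is not shown in this section, so `init * np.exp(-x / tau)` is an assumption (it matches the text in that a negative tau produces exponential growth). The x grid, the random seed and the explicit starting guess `p0` are hypothetical choices for illustration, so the fitted numbers will differ from the 30.60 and 245.03 reported above. The last part refits on 20 randomly chosen points to mimic the sparse-sampling case.

```python
import numpy as np
from scipy.optimize import curve_fit


def exp_decay(x, tau, init):
    """Assumed model body: decays for tau > 0 and grows for tau < 0."""
    return init * np.exp(-x / tau)


# Hypothetical sampling grid and seed, chosen only for this sketch
rng = np.random.default_rng(0)
x = np.linspace(0, 100, 200)
real_tau, real_init = 30, 250

# Sample exp_decay function and add Gaussian noise (scale=50, as in the text)
y = exp_decay(x, real_tau, real_init)
y_noisy = y + rng.normal(scale=50, size=x.shape)

# Fit tau and init to the noisy data; this explicit p0 is an illustrative choice
popt, pcov = curve_fit(exp_decay, x, y_noisy, p0=(10.0, 100.0))
fit_tau, fit_init = popt

# Sample exp_decay with the optimized parameters, e.g. for plotting
fy = exp_decay(x, fit_tau, fit_init)

# Sparse sampling: refit using only 20 randomly chosen data points
idx = rng.choice(x.size, size=20, replace=False)
popt_sparse, _ = curve_fit(exp_decay, x[idx], y_noisy[idx], p0=(10.0, 100.0))

print(f"all points: tau={fit_tau:.2f}, init={fit_init:.2f}")
print(f"20 points:  tau={popt_sparse[0]:.2f}, init={popt_sparse[1]:.2f}")
```

Comparing the two printed lines gives a feel for how much precision is lost when only a tenth of the points are available.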
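Written out as a formula, with $y_i$ the $N$ data points and $f(x_i; \tau, \text{init})$ the model output at $x_i$ (the `fy` values), the sum of squared residuals is:

$$
\mathrm{SSR}(\tau, \text{init}) = \sum_{i=1}^{N} \bigl(y_i - f(x_i; \tau, \text{init})\bigr)^2
$$

`curve_fit` searches for the pair $(\tau, \text{init})$ that minimizes this quantity. If the optional `sigma` argument is given, each residual is divided by its uncertainty before squaring, which turns this into a weighted sum.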
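Because `curve_fit` only refines whatever starting point it is given, one common workaround (a general multi-start technique, not the author's method) is to run the fit from several `p0` values and keep the result with the smallest SSR. This sketch uses the growth case from the text (`real_tau = -30`, `real_init = 20`) and again assumes the body `init * np.exp(-x / tau)` for `exp_decay`. The candidate `p0` list, the grid and the seed are hypothetical. Starts that make the optimizer give up raise `RuntimeError` and are skipped.

```python
import warnings

import numpy as np
from scipy.optimize import curve_fit


def exp_decay(x, tau, init):
    """Assumed model body: decays for tau > 0 and grows for tau < 0."""
    return init * np.exp(-x / tau)


def ssr(y, fy):
    """Sum of squared residuals between data y and model output fy."""
    return float(np.sum((np.asarray(y) - np.asarray(fy)) ** 2))


# Growth case from the text; grid and seed are hypothetical
rng = np.random.default_rng(1)
x = np.linspace(0, 100, 200)
y_noisy = exp_decay(x, -30, 20) + rng.normal(scale=50, size=x.shape)

# Candidate starts: the curve_fit default (1, 1), the -5 guess, and one more guess
candidates = [(1.0, 1.0), (-5.0, 1.0), (-20.0, 10.0)]

best_p, best_ssr = None, np.inf
for p0 in candidates:
    with warnings.catch_warnings():
        # Bad starts can overflow np.exp or leave pcov undefined; silence those warnings
        warnings.simplefilter("ignore")
        try:
            popt, _ = curve_fit(exp_decay, x, y_noisy, p0=p0)
        except RuntimeError:
            # Raised when the optimizer stops without converging
            continue
        score = ssr(y_noisy, exp_decay(x, *popt))
    # Keep the candidate with the lowest finite SSR
    if np.isfinite(score) and score < best_ssr:
        best_p, best_ssr = popt, score

fit_tau, fit_init = best_p
print(f"best: tau={fit_tau:.1f}, init={fit_init:.1f}, SSR={best_ssr:.0f}")
```

Picking the lowest SSR works because every candidate is scored on the same data with the same model. A start that gets stuck, like the default (1, 1) in the text, simply loses to one that reaches the minimum.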